Robot warriors have one big advantage: they can be programmed not to shoot unarmed Afghans.

They would not embrace a "distorted culture", to use the words of the head of the Australian Defence Force, General Angus Campbell, when he described the mindset of those troops who "took the law into their own hands" and killed captives.

Machines have lots of advantages. They can think and see a lot faster than humans - at "hypersonic speed", as the ADF describes it in its study of the implications of a big increase in robots on the battlefield.

In the dry analytic language of the ADF: "In future operations, actions that occur at hypersonic or machine speeds will not allow sufficient time for human decision-makers to generate an awareness of the environment and analyse options."

When the computer program AlphaGo beat the human world champion at the immensely intricate Asian game of Go, the humans who had created it said they were amazed that it had discovered strategies which human players hadn't even known existed. The computer found these previously unknown strategies and exploited them to its advantage.

Winning a game of Go is one thing, flying computers with guns and bombs to destroy people and property quite another. The writing of the rules in the computer's brain is a matter of life and death.

It's the writer of software who will make instantaneous decisions about morality - about what is the right thing or the wrong thing to do - and not the pilot in the air above the city (or even back behind the screen flying the drone from Headquarters Joint Operations Command outside Canberra or wherever on this planet it might be).

These are decisions about pressing the trigger or not, and they need to be debated and scrutinised.

The military and companies, particularly in the defence industry, are not natural engagers in public debate. Secrecy is their natural mode. They need to shake off their shyness.

There are risks as we enter that scary world.

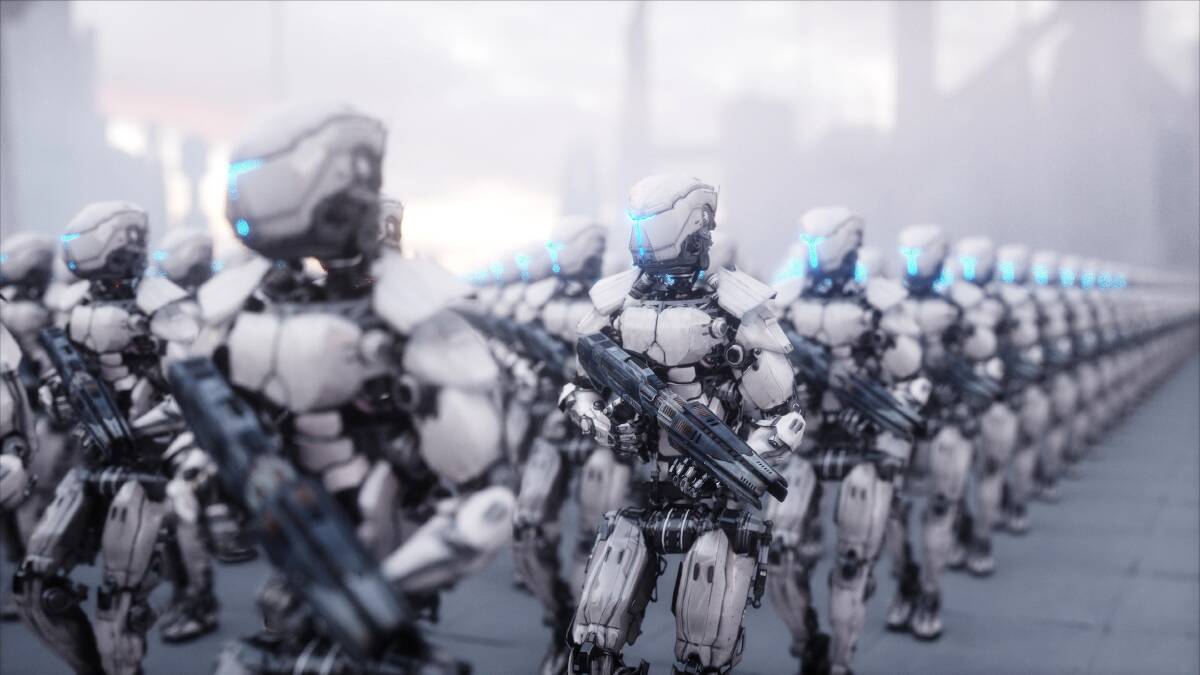

One is to view this science fiction as some sort of cyberpunk apocalypse in which the machines become brighter than we are and decide that they are the masters.

The ending of RoboCop, you may remember, involves the heavily armed robot entering the company boardroom and confronting the chairman, with deliciously bloody results.

Awesome, intelligent machines programmed to kill may seem inhumanly repulsive, but they offer those who defend Australia big advantages - and sure as eggs is eggs, such machines are being developed in Russia, China and other places which might not have our best interests at heart.

There is another risk: the military may get seduced by the truly amazing power of robots.

They offer the Australian Defence Force a vast increase in firepower for the same money - much more bang for the buck. They do the same for the People's Liberation Army in China. Falling in love with military hardware - "toys for boys", as it's sometimes disparagingly called - is hard to resist.

We need to think about the ethics before we let the robots loose. We need to keep our (male) enthusiasm in check.

There is one other, more subtle danger. The most prominent robot warrior so far in the ADF's arsenal will be the "Loyal Wingman", a pilotless, thinking fighter plane being built by Boeing.

Boeing is a commercial company. It makes an array of amazing machines to the great benefit of humankind - but its primary aim is to make profits, albeit by making machines which others want to buy.

We saw the company's dilemmas with the Boeing 737 MAX.

In a fierce commercial market, Boeing seems to have reacted to advances made by its great competitor Airbus by adapting its existing 737. A series of small adaptations eventually resulted in an aircraft which was radically different from the original design - but which hadn't gone through the rigorous regulation which a new design would need.

Adaptation rather than radical redesign saved money. It meant pilots weren't compelled to completely retrain, and that was a huge cost advantage - but it also meant that the pilots of Lion Air Flight 610 and Ethiopian Airlines Flight 302 didn't know how to react when machinery malfunctioned, and 346 people died.

As robots take up arms on our behalf, we need to make sure that the philosophers and thinkers about right and wrong have as much weight in their creation as the accountants and the generals.

The time to think about ethics and robots in war is before they are built. Not in the royal commission after they have run amok.

- Steve Evans is a Canberra Times reporter.