It's the year 2029 and the planet has been reduced to rubble after a nuclear war.

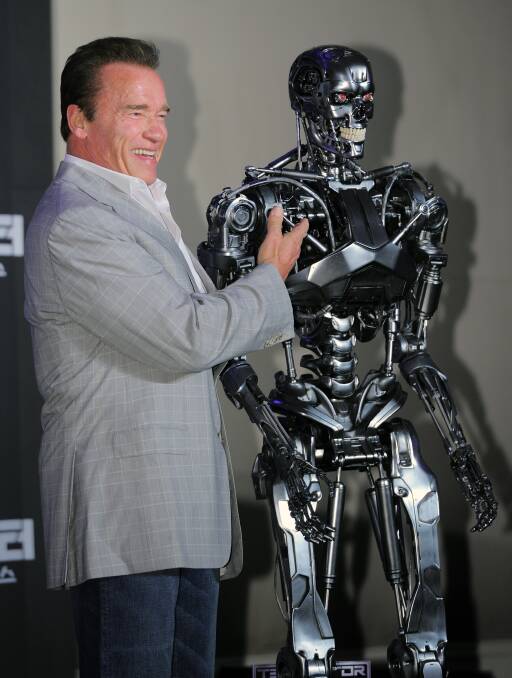

Killer robots of the Arnold Schwarzenegger variety seek to wipe out the last remnants of humanity.

In this world, humans have created an artificial intelligence system which, after being embedded in defence systems and weaponry, becomes self-aware and eager to get rid of its fleshy overlords.

Thankfully, experts say we're a long way from Terminators taking over battlefields, and later cities.

But around the world, lethal autonomous weapons, though still in their relative infancy, are already being put to use.

The Switchblade drone - a type of "kamikaze" drone, which explodes on impact - has been deployed in the conflict in Ukraine.

The Australian Defence Force is also set to acquire a fleet of 13 unmanned aerial vehicles from 2024-25.

The autonomous aircraft, named the MQ-28A Ghost Bat, or Loyal Wingman, will be able to conduct surveillance and combat missions solo or semi-crewed.

Military ethicist Professor Deane Baker from UNSW says that despite these advances in capability, artificial intelligence and autonomous weaponry still fall well short of what science fiction suggests.

"In many ways, [movies] massively overestimate the capabilities that are in the foreseeable future," he says.

"A more appropriate analogy is weaponised animals.

"All of the autonomous weapon systems are sort of like that - they're fairly limited in their capacity. We wouldn't replace a soldier with them but they offer a means to expand a human operator's capabilities."

Still, questions remain over how to govern autonomous systems, and who takes the blame when they fail.

Bianca Baggiarini, a military sociologist at the Australian National University, hopes these questions will remain in focus as technology leaps forward.

She says the global race to develop new and improved equipment is moving ever faster, while developing the laws, regulations and ethics to guide that equipment remains a slow, laborious process.

"Rapid [technological] growth is likely to increase the tempo and pace of warfare to a point where humans can no longer keep up," Dr Baggiarini says.

"So decision time in the context of a hypersonic missile, for example, could be seconds.

"The challenge will be putting ethics and law at the start of the conversation."

Dr Baggiarini says much of the technology is still used for relatively banal tasks, rather than autonomously hunting down enemy targets and destroying them.

Artificial intelligence is mostly used to assist human operators with acquiring and analysing information, detecting and identifying objects, and providing decision support, she says.

But as machine learning continues to develop, multilateral organisations are looking at how its use in combat can be regulated.

The Department of Defence attended the Responsible AI in the Military Domain (REAIM) summit held in The Hague earlier this year. Alongside more than 50 other countries, it publicly committed to putting the responsible use of AI high on the political agenda.

The North Atlantic Treaty Organisation (NATO) developed its own framework for the responsible use of the technology in 2021, and it expects to deliver a certification standard later this year for industries and institutions to adhere to.

The framework's six principles are lawfulness; responsibility and accountability; explainability and traceability; reliability; governability; and bias mitigation.

NATO assistant secretary-general Baiba Braze told The Canberra Times in April that active discussions were under way between Australian and NATO officials over the principles.

The Defence Department had also earlier entered into a $4 million contract with UNSW for research into the ethics of using artificial intelligence in the military, though the work was scrapped in 2021 before it was completed.

Dr Baggiarini says it's important that research into the ethics of using AI in military contexts keeps pace with the technology itself.

Because the technology is a new frontier, she warns, many negative consequences could play out in real-life scenarios if it is left untested.

"Machine learning is non-deterministic so this means that while humans set some initial parameters, the system learns on its own through data," she says.

"Machine learning does best in controlled and predictable environments and so we are told time and time again that warfare is unpredictable.

"We need to understand the implications of inserting a technology that performs best in controlled contexts into an environment that is notoriously unpredictable."

Professor Baker says the future of AI in combat doesn't have to be all doom and gloom - or Terminator and I, Robot.

He believes it's important to remember that autonomous technology could mean fewer human casualties on the battlefield.

"We tend to focus on the ethical risks and that's entirely appropriate, however, those risks must always also be weighed against the positives," he says.

"We mustn't overlook that these are - used in the right way - potentially life-saving capabilities."

With much of the work under way behind the scenes within government, Dr Baggiarini says the first positive step will be bringing the public along on the journey.

"I think that too often security and defence practices and decisions are made in closed rooms," she says.

"Transparency and publicity of these decisions, and these processes is probably step one.

"I think it's really important to make sure that ordinary people are involved and are aware of what their military is doing."