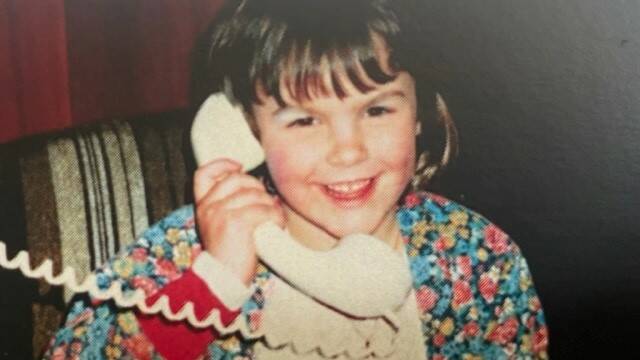

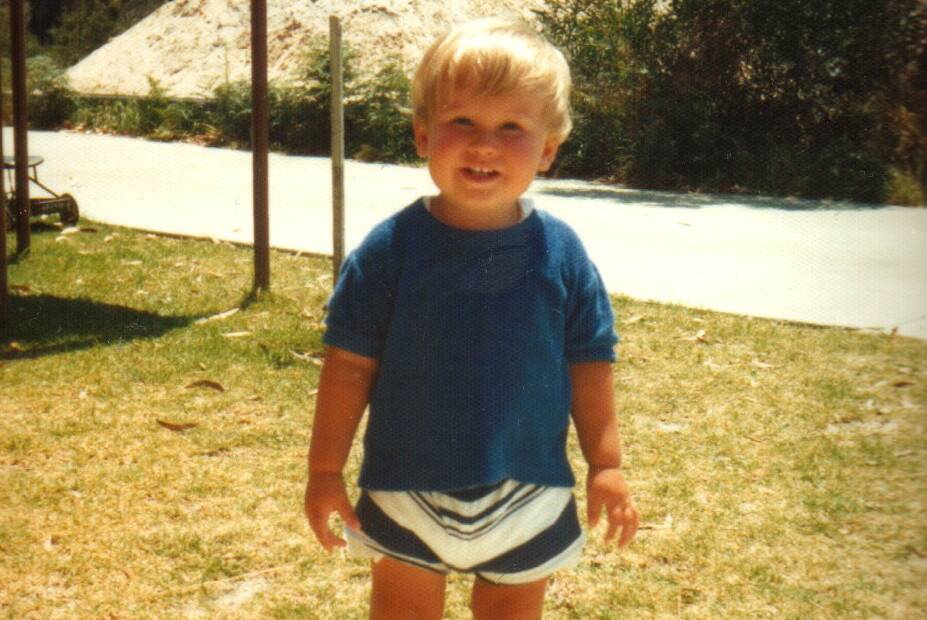

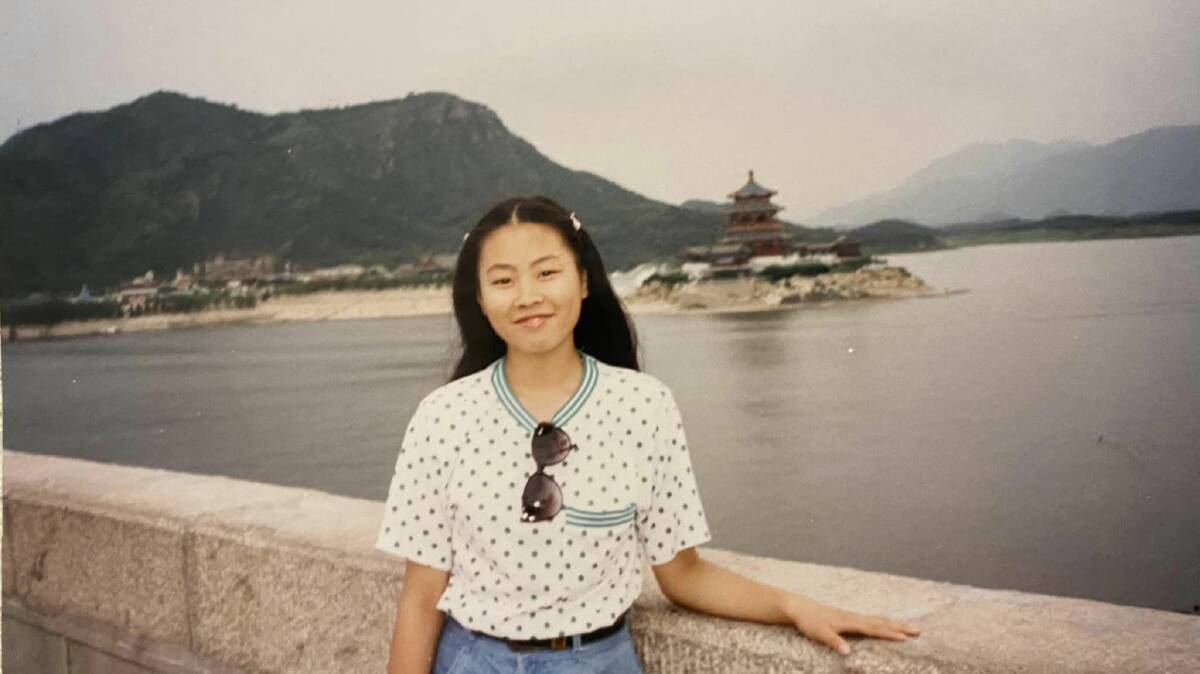

Australian adults are being asked to upload pictures of their younger selves to help software developers build a world-first artificial intelligence (AI) tool designed to detect child abuse material online.

The crowdsourcing project, a collaboration between Monash University and the Australian Federal Police (AFP), is collecting images through the My Pictures Matter webpage.

The images will be used to develop an ethical AI tool that scans videos and images shared on the internet or the dark web for suspected child abuse material.

Police will also be able to use the tool to search the devices of alleged offenders.

AFP Deputy Commissioner Lesa Gale said the project needs around 100,000 pictures of Australians of all ethnicities, taken when they were aged 0 to 17, before the tool can launch.

"By having access to ordinary, everyday photographs, the AI tool will be trained to look for what is different and identify unsafe situations, flagging potential child sexual abuse material," Deputy Commissioner Gale said.

"The AFP and Monash University are asking adults to provide pictures of themselves in their youth, not images of their children, because consent is important," she said.

"We also do not want to source images from the internet because the children in those pictures have not consented to their photographs being uploaded or used for research."

Deputy Commissioner Gale said the project will spare officers the awful task of watching children being sexually abused for investigative purposes "day-in, day-out".

Participants are free to withdraw their childhood photos from the dataset if they change their mind, My Pictures Matter project head Dr Nina Lewis said.
