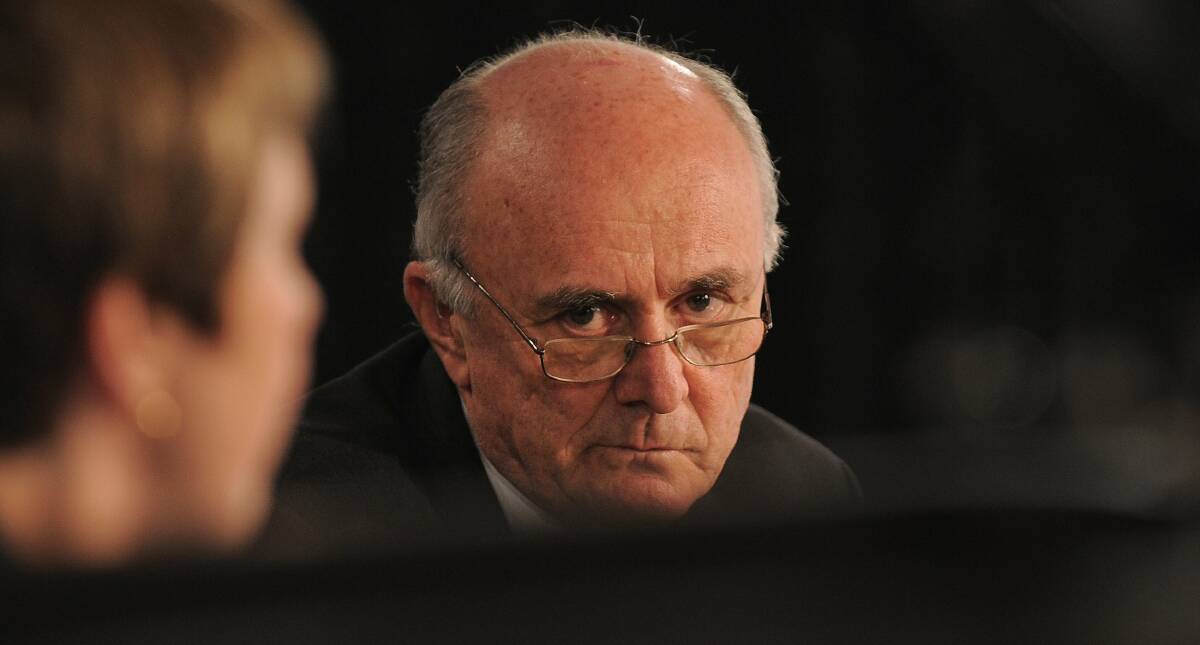

Former competition tsar Professor Allan Fels has called on the Albanese government to stop Meta from spreading fake news with its new AI function.

The Canberra Times revealed this week that Meta AI is purporting to summarise paywalled news articles, but delivering wildly inaccurate and, at times, defamatory content to Facebook and Instagram users.

Professor Fels, former Chair of the ACCC, said the government must "take steps to make Meta responsible" for its latest foray into spreading disinformation and misinformation.

"The public should be highly concerned at this development," he told this masthead.

"Clearly, someone has to be held responsible when stories like this occur."

Communications Minister Michelle Rowland is seeking the advice of the ACCC and the Treasury on a potential move to force Meta to pay media companies for news, as experts say social media companies' misuse of journalistic content must be stopped.

The social media company was unapologetic when asked to explain its conduct this week, admitting in a statement that "AI might return inaccurate or inappropriate outputs" while sidestepping the issue of damage this can cause. Meta will stop paying media companies for news when existing agreements expire this year.

Media law expert Tomas Fitzgerald, a lecturer at the Curtin School of Law, said Meta was "scraping the web and taking published work as data to train their models, without acknowledgement or compensation".

"This is pretty extraordinary," he told The Canberra Times, describing the fake news output of Meta's new chatbot as an "AI hallucination".

He said companies like Meta were misrepresenting to users what the AI is doing, presenting AI "as though it is intelligent, and 'understands' what is being generated".

In reality, he said, the technology is designed to "generate plausible-sounding outputs without any regard to the meaning of the words".

Meta's AI was simply guessing at what a summary of the news article would say, he explained, warning that its behaviour in spreading false information was "dangerous".

"It is like putting the old-school troll farms - where many people were paid to generate misinformation on social media - on steroids," he said.

The so-called summaries of news articles written by journalists are generated when users click on the "ask me anything" function, represented by a blue circle within Facebook or Messenger.

When asked to summarise an exclusive, paywalled New York Times report on a meeting between Donald Trump and Ron DeSantis in Hollywood, Florida, Meta AI wrongly claimed the meeting happened at Mar-a-Lago, Trump's Palm Beach resort.

Bizarrely, the AI chatbot quoted conflicting sources describing the meeting as both "cordial" and "tense".

On some occasions, Meta AI will state that a paywalled article can't be summarised, but a follow-up prompt repeating the request easily persuades it to produce one.

Mr Fitzgerald said it was "well past time that regulators put a stop to this behaviour" and that the government should designate Meta as a publisher under the News Media Bargaining Code.

"Meta was given an enormous second chance after the debacle when it blocked Australian news websites in 2021," he said.

"It seems unlikely that the government has any way forward other than using stronger compulsory regulation."