Am I the only person who thinks the scariest thing about The Terminator franchise wasn't Arnold Schwarzenegger's acting or Linda Hamilton's biceps? For years I couldn't stop thinking about the idea of Skynet, the AI overlord of the franchise, if you like, and how, once machines became sentient things, the world would come crashing down around us.

"Once it got smart," says the character Kyle Reese, "it saw all humans as a threat ... and decided our fate in a nanosecond."

I get it. It's a film. Or six films (which have grossed more than $2 billion since 1984 when the initial one was released). But when August 29, 1997, passed without a hitch I'm not afraid to admit I was somewhat relieved.

But perhaps our judgement day is closer than we think.

Artificial intelligence is all around us. We rely on technology almost every minute of the day. Are you reading this on your phone? Have you asked Siri what the weather will be today? Have you searched the web for kitten memes? Have you popped Netflix on to line up The Terminator so you can watch it later tonight?

It's worrying when the tech bros start worrying too.

Earlier in May, Dr Geoffrey Hinton, the man many consider the "godfather of AI", quit Google so he could "speak freely" about the dangers of AI. He'd been with the company for a decade, pioneering the neural-network research behind systems such as ChatGPT. Now he's sending a warning that they could become more intelligent than humans.

As far back as 2014, Stephen Hawking issued a warning about the direction AI was heading.

Even Elon Musk is worried that we're not taking AI seriously enough, saying that Google's approach, under co-founder Larry Page, has "great potential for good, but there's also potential for bad".

But like most of us, award-winning journalist Tracey Spicer didn't give such things much thought until one day her then 11-year-old son Taj asked if he could have a robot slave.

He'd been watching an episode of South Park where Cartman gets an Amazon Alexa and proceeds to order it around in language full of insults, abuse and sexual innuendo.

"I watched the episode and Cartman was treating Alexa appallingly," she says.

"And I had this light-bulb moment, realising that all the biases of the past, this idea of women and girls being servile in society, is being built into the chat bots that are running our futures."

She issued her son a firm no, but couldn't stop thinking about the implication of misogyny being embedded into machines.

"Does this mean they're beset with other types of bigotry?" she asked herself. "Are racist, homophobic, ableist, transphobic and ageist attitudes also evident?"

She decided she needed to find out more and for the next six years went deep, deep down the rabbit hole "tracing the constellation of technologies known as artificial intelligence".

She wanted to find out who was building the beast, what we could do to mitigate the damage, and which organisations should be held to account.

"At least in Mary Shelley's classic work of horror, Frankenstein realises the error of his ways before dying while fleeing from his monster," she says.

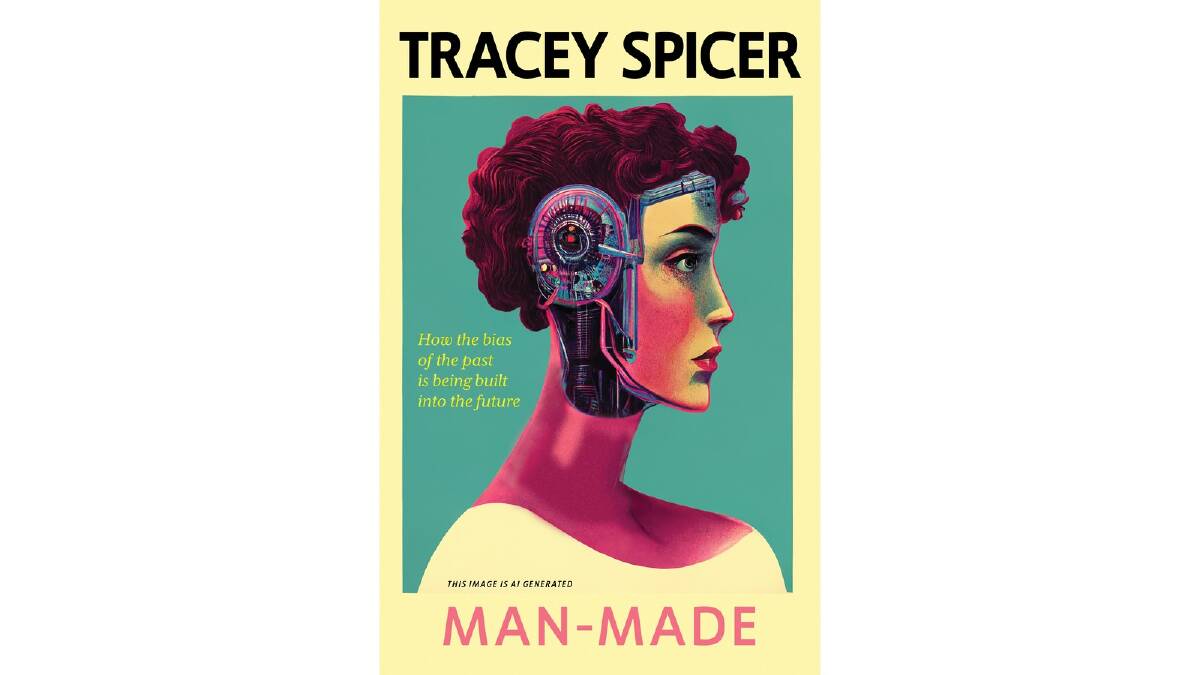

Spicer's Man-Made: How the bias of the past is being built into the future is the culmination of that research.

Spicer is happy to admit she's a digital newbie, explaining the technology behind it all in a clear way for those of us who know nothing either.

It's horrifying and enlightening all at the same time, told with a sense of humour and a touch of sarcasm. If you don't laugh when you read about the bionic penis, let me know.

I tried to explain the premise of the book to a room full of people, mostly men, who rolled their eyes at the idea that there was something sinister in the fact that "assistants", such as Siri, Alexa and Google Nest, are usually voiced by a woman.

But the book is so much more than that. It's full of examples of how design and technology are biased. Or at least not even thinking about humanity.

When a woman is involved in a car crash, she's 17 per cent more likely to die. It's all about for whom the car is designed. Women tend to be shorter so we sit further forward in an upright position to better see over the dashboard. That matters at impact. Further still, there wasn't even a female crash test dummy until 2022, courtesy of a Swedish engineering team led by Dr Astrid Linder.

In 2016, during one of North America's coldest winters, home thermostats controlled by Google Nest shut down, leaving home owners freezing. A software update was blamed, and there was a nine-step process to fix it.

In 2017, Chukwuemeka Afigbo, a Nigerian tech worker, tweeted a video of a "racist" soap dispenser at a Marriott Hotel: it worked for a white person's hand but not a black person's. The dispenser uses infrared technology to detect when a hand is underneath. Darker tones absorb more light so there's not enough to activate the dispenser.

Spicer says one of the anecdotes that really disturbed her was the story of Ghanaian-American-Canadian computer scientist Dr Joy Buolamwini of the Algorithmic Justice League (and you have to love that there's a group called that), which does transformative work by challenging bias in decision-making software.

"She created this device called an Aspire Mirror where, say, young girls wanted to look up to Serena Williams and they'd see her face reflected back at them but the device wouldn't recognise her face until she put a white mask on.

"It mightn't sound like much but imagine what it means for facial recognition, or how data is collected and disseminated.

"That's what scares me the most is that these apps, these algorithms, they affect every aspect of our lives, from whether we get the job, to whether we can move country, whether we can get a ventilator or whether we could survive in a self-driving car."

Spicer, now 55, is a multiple Walkley Award-winning journalist who has anchored national programs for multiple networks. In 2018 she was named one of the Financial Review's 100 Women of Influence.

In late 2006, after 14 years with Network Ten, she was dismissed after returning from maternity leave following the birth of her second child, Grace. Spicer claimed she was discriminated against; Ten denied the allegations and the case was settled out of court. In 2017 she released her autobiography, The Good Girl Stripped Bare, which looked at the inequities faced by women not only in the media, but in society as a whole.

"What I learned during the six-year odyssey writing this latest book changed my feminism forever," she says.

There's a terrifying chapter, Coercive Control, about how technology can be used in abusive relationships. Abusers can see what their partners are watching and what they're asking Alexa about, and can randomly control things such as lights and heating remotely. Maybe they're using "creepware" to stalk people. In Hobart in 2019 a mechanic faced court for setting up an app to control his ex-girlfriend's car: not only could he track her movements, but he could suddenly stop the moving vehicle.

In a chapter called Virtual Rape she talks to Canberra journalist Ginger Gorman, who wrote the 2019 book Troll Hunting, about how cyberhate creates online engagement, which is exactly what the algorithms are after.

She does have a little bit of fun with the chapter on Sexbots. Every day there seems to be a new story about some man finding his AI soulmate. The first sexbot was created in the late 1970s. She was a 36C.

The first sexbot powered by AI, Roxxxy, debuted in 2010. Five distinct personalities help her interact with her "master"; they include Frigid Farrah, Wild Wendy and Mature Martha. Young Yoko is only just 18; her profile is "oh so young and waiting for you to teach her".

There have been significant advances in sexbots in recent years, Spicer writes. RealDollX comes with 11 styles of vagina inserts and a set of elf ears. It's programmed to make "appropriate noises".

"I'm assuming they have the ability to fake it, rather like Meg Ryan's character in When Harry Met Sally," says Spicer.

This chapter perhaps highlights the overall disconnect that's occurred in society. How we rely on apps to meet people online, how we've put the decision-making process in the hands of technology, how the whole idea of kindness and compassion doesn't fit into the equation that's driving the algorithm.

Spicer agrees. She knows it's impossible to banish technology from our lives entirely.

"It starts with something as simple as changing Siri's voice to a man's, thinking about where AI is embedded in our everyday lives," she says. "And then we need to remember we live in a democracy, we need to stir up our government to legislate for change. We need to get more women and girls involved in STEM and encourage them to change things at the programming level."

I think back to a few of my favourite quotes from Terminator. Sure, there's the one that goes:

"F***ing men like you built the hydrogen bomb. Men like you thought it up. You think you're so creative. You don't know what it's like to really create something; to create a life; to feel it growing inside you. All you know how to create is death ..." via Sarah Connor.

But then there's this one, which is a little more fitting.

"The unknown future rolls toward us," Sarah Connor says. "I face it for the first time with a sense of hope, because if a machine, a Terminator, can learn the value of human life, maybe we can, too."

Let's just hope we can learn the value of humanity.

- Man-Made: How the bias of the past is being built into the future, by Tracey Spicer. Simon & Schuster. $34.99.
